Live Streaming Accessibility in 2026: From Compliance to Competitive Advantage

Accessibility in live streaming has moved far beyond its original role as a compliance requirement. What was once treated as an add-on (captions here, translation there) is now becoming a core part of how content is distributed, experienced, and scaled globally.

This shift is not driven by regulation alone. It is driven by how people actually consume content.

An estimated 1.3 billion people, about one in six worldwide, live with a significant disability, according to the World Health Organization. But the relevance of accessibility extends well beyond that group. A much broader audience relies on accessibility features every day, often without realizing it.

Consider a few realities of modern viewing behavior:

- A large percentage of video is consumed without sound, especially on mobile

- Viewers frequently watch content in environments where audio is not practical

- A growing share of audiences engages with content in a non-native language

- Global platforms increasingly distribute the same content across multiple regions simultaneously

At the same time, the linguistic imbalance of the internet remains significant. Around half of online content is in English, while the majority of the global population is not fluent in it. That gap represents not just a limitation in access, but a structural barrier to growth.

Accessibility, in this context, becomes less about accommodation and more about unlocking reach.

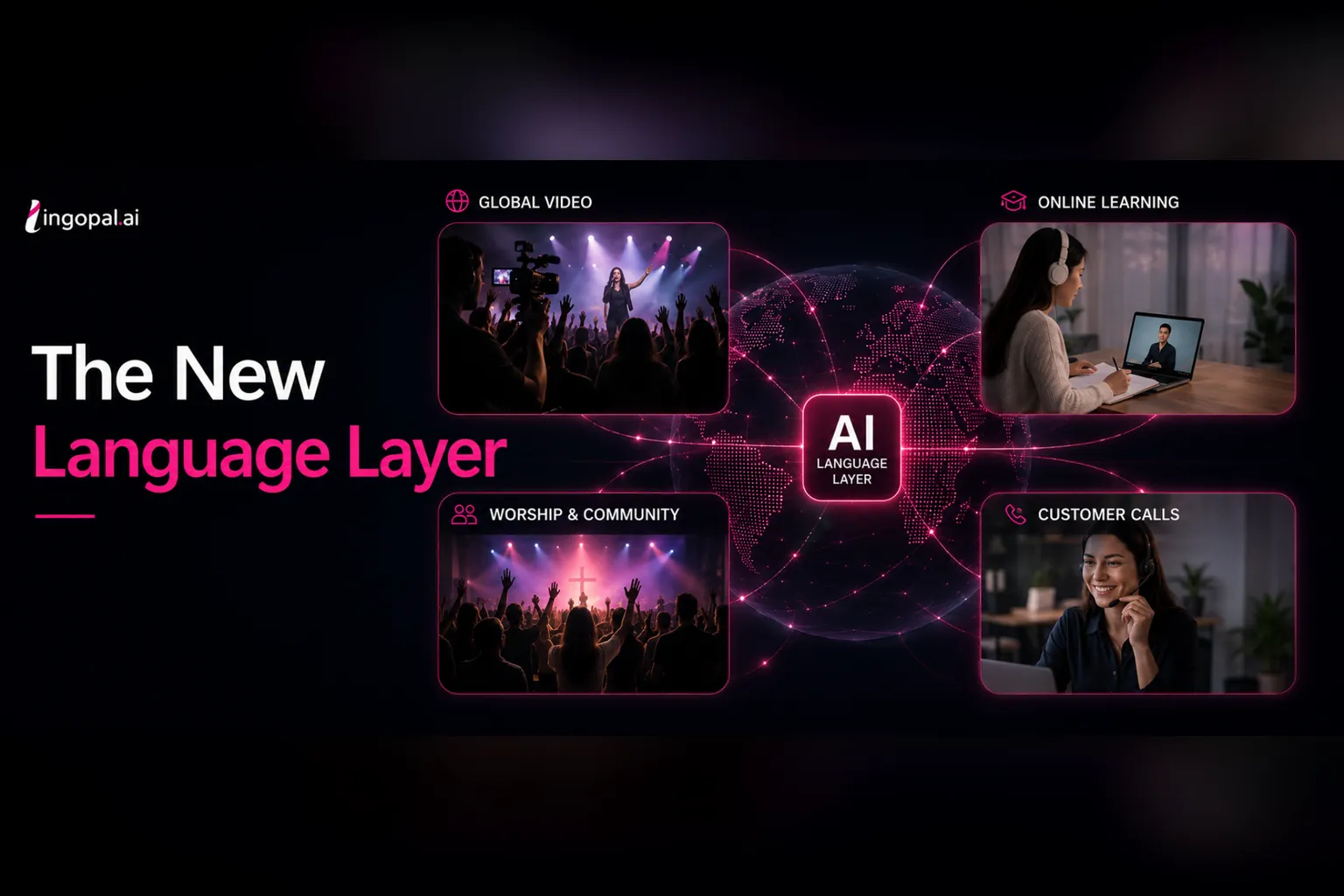

A modern live stream that is truly accessible is built on multiple layers working together in real time. These layers typically include captions, translation, audio descriptions, and an interface that supports assistive technologies. But the real differentiator is not the presence of these features; it is how seamlessly they are integrated.

When these elements operate independently, the experience feels fragmented. Captions may appear instantly, while translations lag behind. Audio descriptions may interrupt key moments. Interfaces may technically function but still be difficult to navigate. The result is friction, even when the intention is correct.

When they are integrated into a single workflow, however, the experience changes completely. Everything stays aligned. Timing is consistent. The viewer does not have to think about accessibility; it simply works.

This is where the limitations of traditional workflows become clear.

Many organizations still rely on separate systems to handle different aspects of accessibility. This often means:

- Multiple vendors or tools for captions, translation, and audio layers

- Additional manual steps to align outputs

- Increased risk of latency differences across features

- Higher operational costs and technical overhead
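One way to catch the latency differences across features listed above is to stamp every output with the source segment it was generated from, then measure, per segment, how far apart the tracks reach the viewer. The sketch below is illustrative only: the `Delivery` record, the track names, and the 0.5-second skew budget are hypothetical choices, not values taken from any standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Delivery:
    """Hypothetical record of one accessibility output reaching the viewer."""
    track: str        # e.g. "captions", "translation", "audio_description"
    segment_id: int   # source segment this output was generated from
    shown_at: float   # seconds on a shared clock when the viewer received it


def cross_track_skew(deliveries: list[Delivery]) -> dict[int, float]:
    """Per source segment: latest delivery minus earliest delivery across all tracks."""
    times: dict[int, list[float]] = defaultdict(list)
    for d in deliveries:
        times[d.segment_id].append(d.shown_at)
    return {seg: max(ts) - min(ts) for seg, ts in times.items()}


def out_of_sync(deliveries: list[Delivery], budget_s: float = 0.5) -> list[int]:
    """Segment IDs whose tracks drift apart by more than the assumed skew budget."""
    skews = cross_track_skew(deliveries)
    return sorted(seg for seg, skew in skews.items() if skew > budget_s)


if __name__ == "__main__":
    events = [
        Delivery("captions", 1, 10.2), Delivery("translation", 1, 10.4),
        Delivery("captions", 2, 12.1), Delivery("translation", 2, 15.3),
    ]
    print(out_of_sync(events))  # [2]: translation trails captions by about 3.2 s
```

Keying every output to its source segment, rather than comparing tracks by arrival order, is what lets a single integrated workflow detect drift that separate tools would each miss.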

The issue is not effort, but architecture.

There are essentially two models in play. The first processes accessibility features sequentially, introducing delays at each stage. The second processes a single input stream once and generates multiple outputs in parallel. The latter approach significantly reduces latency, improves synchronization, and simplifies operations.

This architectural shift is one of the most important developments in live streaming today.
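The difference between the two models can be simulated with Python's `asyncio`. This is a sketch, not a production pipeline: the stage names and timings in `STAGE_SECONDS` are assumed, and `asyncio.sleep` stands in for real caption, translation, and audio-description work. In the sequential model the last output arrives after roughly the sum of the stage times; in the parallel model, after roughly the slowest single stage.

```python
import asyncio
import time

# Assumed per-output processing times in seconds (illustrative, not measured).
STAGE_SECONDS = {"captions": 0.03, "translation": 0.08, "audio_description": 0.05}


async def produce(output: str, seconds: float, segment: str) -> tuple[str, str]:
    """Stand-in for one accessibility output; asyncio.sleep simulates the work."""
    await asyncio.sleep(seconds)
    return output, f"{output}({segment})"


async def sequential(segment: str) -> dict[str, str]:
    """Model 1: each output starts only after the previous one finishes."""
    results = {}
    for output, seconds in STAGE_SECONDS.items():
        name, value = await produce(output, seconds, segment)
        results[name] = value
    return results


async def parallel(segment: str) -> dict[str, str]:
    """Model 2: the segment is taken in once and every output is generated concurrently."""
    pairs = await asyncio.gather(
        *(produce(output, seconds, segment) for output, seconds in STAGE_SECONDS.items())
    )
    return dict(pairs)


def timed(pipeline, segment: str) -> tuple[dict[str, str], float]:
    """Run one pipeline model to completion and return (outputs, elapsed seconds)."""
    start = time.perf_counter()
    outputs = asyncio.run(pipeline(segment))
    return outputs, time.perf_counter() - start


if __name__ == "__main__":
    _, t_seq = timed(sequential, "seg-1")
    _, t_par = timed(parallel, "seg-1")
    print(f"sequential {t_seq:.2f}s, parallel {t_par:.2f}s")
```

With these assumed timings the sequential run takes about 0.16 s and the parallel run about 0.08 s. Real stages are not always independent (in a cascaded setup, translation typically needs a transcript first), so in practice the shared step runs once and only the independent outputs fan out.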

Latency, in particular, has become a defining factor in user experience.

In theory, a delay of a few seconds may seem acceptable. In practice, even small delays can disrupt attention. Once latency reaches a certain threshold, often around 10 seconds or more, viewers begin to experience a disconnect between what they see and what they read or hear.

This creates a subtle but important effect:

- Attention is split

- Comprehension becomes harder

- Engagement drops

For live content, where timing is critical, this can significantly impact retention.
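Because the disconnect depends on the delay viewers actually experience, it helps to measure end-to-end delay per segment and look at the tail rather than the average. A minimal sketch, assuming each segment has a spoken-at time and a delivered-at time on the same clock; the 10-second threshold is the rough figure mentioned above, used here as a tunable assumption, and the function names are hypothetical.

```python
import math

# Rough disconnect point cited in the text; a tunable assumption, not a standard.
DISCONNECT_THRESHOLD_S = 10.0


def end_to_end_delays(spoken_at: list[float], delivered_at: list[float]) -> list[float]:
    """Delay per segment: when the viewer received the output minus when it was spoken."""
    if len(spoken_at) != len(delivered_at):
        raise ValueError("each spoken segment needs exactly one delivery time")
    return [out - src for src, out in zip(spoken_at, delivered_at)]


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile, pct in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def latency_report(spoken_at: list[float], delivered_at: list[float],
                   threshold_s: float = DISCONNECT_THRESHOLD_S) -> dict[str, float]:
    """Summarize the delay distribution, focusing on the tail viewers actually notice."""
    delays = end_to_end_delays(spoken_at, delivered_at)
    if not delays:
        raise ValueError("no segments to report on")
    return {
        "p50_s": percentile(delays, 50),
        "p95_s": percentile(delays, 95),
        "share_over_threshold": sum(d > threshold_s for d in delays) / len(delays),
    }


if __name__ == "__main__":
    print(latency_report([0, 5, 10, 15], [3, 9, 22, 19]))
```

For the example, delays of 3, 4, 12, and 4 seconds give a median of 4 s but a 95th percentile of 12 s, with a quarter of segments past the threshold: the kind of intermittent lag an average alone would hide.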

Performance metrics help illustrate this. Translation quality is commonly measured with BLEU, which scores machine output against one or more reference translations and is typically reported on a 0 to 100 scale; by one widely used rule of thumb, scores above 60 indicate quality comparable to professional human translation. Latency for live dubbing systems is measured as the end-to-end delay, in seconds, between a phrase being spoken and its translated audio reaching the viewer; high-performing systems keep that window short while staying synchronized with the original stream.

These are not just technical benchmarks. They are thresholds that define whether accessibility enhances or undermines the experience.
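For readers who want to see what a BLEU score actually computes, here is a compact corpus-level version of the standard definition: clipped n-gram precision up to 4-grams, their geometric mean, and a brevity penalty, scaled to 0 to 100. It is a sketch: it uses plain whitespace tokenization and no smoothing, so its numbers will not match published scores exactly; reported results should come from a standard tool such as sacreBLEU.

```python
import math
from collections import Counter


def ngram_counts(tokens: list[str], n: int) -> Counter:
    """Count every contiguous n-token sequence."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses: list[str], references: list[list[str]], max_n: int = 4) -> float:
    """Corpus BLEU on a 0-100 scale (whitespace tokens, no smoothing).

    hypotheses[i] is a system translation; references[i] lists its reference translations.
    """
    if len(hypotheses) != len(references):
        raise ValueError("need one list of references per hypothesis")
    matches = [0] * max_n  # clipped n-gram matches, per order n
    totals = [0] * max_n   # n-grams in the hypotheses, per order n
    hyp_len = ref_len = 0

    for hyp, refs in zip(hypotheses, references):
        hyp_tokens = hyp.split()
        ref_tokens = [r.split() for r in refs]
        hyp_len += len(hyp_tokens)
        # Effective reference length: the reference closest in length (ties go to the shorter).
        ref_len += min((abs(len(r) - len(hyp_tokens)), len(r)) for r in ref_tokens)[1]
        for n in range(1, max_n + 1):
            hyp_ngrams = ngram_counts(hyp_tokens, n)
            max_ref = Counter()
            for r in ref_tokens:
                max_ref |= ngram_counts(r, n)  # keep the highest count seen in any reference
            matches[n - 1] += sum(min(c, max_ref[g]) for g, c in hyp_ngrams.items())
            totals[n - 1] += sum(hyp_ngrams.values())

    if min(matches) == 0:
        return 0.0  # without smoothing, any order with zero matches zeroes the score
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    brevity_penalty = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * brevity_penalty * math.exp(log_precision)
```

An exact match scores 100. For the hypothesis "the cat sat on the mat" against the reference "the cat sat on a mat", the four precisions are 5/6, 3/5, 2/4, and 1/3, giving a score of about 53.7, which shows how quickly a single wrong word erodes the higher-order matches.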

User behavior further reinforces the importance of getting this right.

Captions, for example, have evolved from a niche accessibility feature into a mainstream viewing habit. They are widely used by:

- Viewers in sound-sensitive environments

- Mobile users scrolling through content

- Audiences trying to follow fast or complex discussions

- Non-native speakers seeking additional clarity

In many cases, captions are used more frequently by viewers without hearing impairments than by those they were originally designed for.

Translation is undergoing a similar transformation. While subtitles remain valuable, they require continuous attention and cognitive effort. Real-time speech-to-speech translation offers a more natural alternative, allowing viewers to follow content without shifting focus between audio and text.

When combined with voice synthesis that preserves tone, pacing, and speaker characteristics, translation becomes less about converting words and more about delivering a native experience.

Voice plays a critical role here. It carries emotion, emphasis, and credibility. Removing it flattens the content. Preserving it maintains connection.
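Preserving pacing also has a timing side: translated speech rarely has the same length as the original, yet in a live stream it has to fit the same slot. One approach used in automated dubbing is to adjust the speaking rate of the synthesized voice toward the source segment's duration, within limits that keep it sounding natural. The sketch below shows that calculation; the 0.85x to 1.25x limits and the `fit_to_slot` helper are hypothetical, and any remaining overflow would have to be handled another way, for example with a shorter rephrasing.

```python
from dataclasses import dataclass

# Assumed limits on how far synthesized speech can be sped up or slowed down before it
# stops sounding natural; real systems would tune these per voice and language.
MIN_RATE = 0.85
MAX_RATE = 1.25


@dataclass
class FitPlan:
    rate: float        # playback-rate multiplier for the synthesized audio (>1 = faster)
    overflow_s: float  # seconds that still run past the source slot after clamping
    slack_s: float     # seconds of the slot left unfilled (translated speech ran short)


def fit_to_slot(source_s: float, synthesized_s: float) -> FitPlan:
    """Pick a speech rate so translated audio fills the original segment's time slot."""
    if source_s <= 0 or synthesized_s <= 0:
        raise ValueError("durations must be positive")
    ideal = synthesized_s / source_s          # rate that would fit the slot exactly
    rate = min(max(ideal, MIN_RATE), MAX_RATE)
    fitted_s = synthesized_s / rate           # duration after applying the clamped rate
    return FitPlan(
        rate=rate,
        overflow_s=max(0.0, fitted_s - source_s),
        slack_s=max(0.0, source_s - fitted_s),
    )


if __name__ == "__main__":
    # Hypothetical case: a translation that runs 50% longer than a 4-second original.
    print(fit_to_slot(source_s=4.0, synthesized_s=6.0))
```

In that example the rate is capped at 1.25x and about 0.8 s still overflows, which is exactly the case where speeding up alone would flatten the delivery and a tighter translation is the better fix.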

Regulation is also evolving in parallel with these technological and behavioral shifts.

Frameworks such as the Americans with Disabilities Act (ADA), Section 508, WCAG 2.1, and the European Accessibility Act are increasingly aligned around a central principle: accessibility should provide an equivalent experience, not just minimal access.

This raises the bar significantly.

It is no longer enough to provide captions if they are delayed or inaccurate. It is not enough to offer translation if it disrupts the flow of content. Accessibility must be timely, consistent, and fully integrated into the viewing experience.
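Caption accuracy is commonly quantified with word error rate (WER): the minimum number of word substitutions, deletions, and insertions separating the captions from a reference transcript, divided by the number of reference words. A minimal sketch, assuming lowercase whitespace tokenization (production scoring usually also normalizes punctuation and numerals); it measures accuracy only, so timeliness has to be checked separately.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j]: edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion: reference word missing from captions
                curr[j - 1] + 1,         # insertion: extra word in captions
                prev[j - 1] + (r != h),  # substitution, or a match at no cost
            )
        prev = curr
    return prev[-1] / len(ref)
```

For example, captions reading "the quack brown" for the spoken "the quick brown fox" contain one substitution and one deletion, a WER of 0.5.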

Organizations that fail to deliver timely, accurate, and integrated accessibility may face not only legal risk but also reputational and commercial consequences.

Beyond compliance, the business case for accessibility is becoming clearer.

Accessibility expands audience reach by removing language and usability barriers. It increases engagement by making content easier to follow in different contexts. It also enables more efficient distribution, allowing a single stream to serve multiple regions without additional production effort.
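As one concrete illustration of a single stream serving multiple regions: with HLS (RFC 8216), one master playlist can point every viewer at the same video while advertising alternative audio and subtitle renditions per language, and the `CHARACTERISTICS` attribute can mark accessibility tracks such as an audio-description mix (`public.accessibility.describes-video`). The sketch below generates such a playlist. HLS is only one possible delivery format, and the URIs, bandwidth, codec string, and `Rendition` helper are hypothetical placeholders.

```python
from dataclasses import dataclass

# Group IDs tie renditions to the video variant; the names themselves are arbitrary.
GROUP_IDS = {"AUDIO": "aud", "SUBTITLES": "subs"}


@dataclass
class Rendition:
    kind: str                  # "AUDIO" or "SUBTITLES"
    language: str              # RFC 5646 language tag, e.g. "en", "es"
    name: str                  # label shown in the player's track menu
    uri: str                   # media playlist for this rendition (placeholder path)
    default: bool = False
    characteristics: str = ""  # e.g. "public.accessibility.describes-video"


def media_tag(r: Rendition) -> str:
    """One #EXT-X-MEDIA line describing an alternative audio or subtitle rendition."""
    attrs = [
        f"TYPE={r.kind}",
        f'GROUP-ID="{GROUP_IDS[r.kind]}"',
        f'LANGUAGE="{r.language}"',
        f'NAME="{r.name}"',
        f"DEFAULT={'YES' if r.default else 'NO'}",
        "AUTOSELECT=YES",  # lets the player pick tracks from user language/accessibility settings
    ]
    if r.characteristics:
        attrs.append(f'CHARACTERISTICS="{r.characteristics}"')
    attrs.append(f'URI="{r.uri}"')
    return "#EXT-X-MEDIA:" + ",".join(attrs)


def master_playlist(renditions: list[Rendition], video_uri: str,
                    bandwidth: int, codecs: str) -> str:
    """A master playlist: one video variant that references every language rendition."""
    lines = ["#EXTM3U"]
    lines += [media_tag(r) for r in renditions]
    lines.append(
        f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},CODECS=\"{codecs}\","
        f"AUDIO=\"{GROUP_IDS['AUDIO']}\",SUBTITLES=\"{GROUP_IDS['SUBTITLES']}\""
    )
    lines.append(video_uri)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    renditions = [
        Rendition("AUDIO", "en", "English", "audio/en.m3u8", default=True),
        Rendition("AUDIO", "en", "English (described)", "audio/en-ad.m3u8",
                  characteristics="public.accessibility.describes-video"),
        Rendition("AUDIO", "es", "Spanish", "audio/es.m3u8"),
        Rendition("SUBTITLES", "en", "English", "subs/en.m3u8"),
        Rendition("SUBTITLES", "es", "Spanish", "subs/es.m3u8"),
    ]
    print(master_playlist(renditions, "video/1080p.m3u8", 5_000_000,
                          "avc1.640028,mp4a.40.2"))
```

The video is encoded once; adding a market means adding renditions to the playlist rather than producing a separate stream.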

The impact of localization has already been demonstrated by platforms like Netflix, where multilingual support has driven significant increases in watch time and global audience growth. Live streaming is now moving in the same direction, with accessibility playing a central role.

There are also measurable operational benefits. When accessibility is built into the production workflow, rather than added afterward, organizations can:

- Reduce reliance on manual captioning or translation processes

- Minimize the number of tools and integrations required

- Improve consistency across outputs

- Scale more efficiently across content types and markets

What was once perceived as an additional cost becomes a source of efficiency and competitive advantage.

All of these developments point to a broader shift in how accessibility is understood.

It is no longer a secondary consideration or a compliance checklist. It is part of the infrastructure that enables modern content distribution.

In a world where viewers:

- Switch between devices constantly

- Consume content in multiple languages

- Expect instant access and minimal friction

accessibility becomes fundamental to how streaming works.

At that point, the distinction between “accessible” and “standard” content begins to disappear. Accessibility is simply what defines a complete experience. And increasingly, it is what defines which organizations are able to grow, scale, and compete in a global, real-time media landscape.